Bluesky was built with the belief that social media should serve the people using it, not the other way around. Their Trust & Safety systems are built with a human-centered approach, keeping user safety at the core of everything they do. Partnering with Musubi was a natural extension of that philosophy.

In 2025, Bluesky grew nearly 60% from 25 million to 41 million users, with nearly 10 million user reports to moderate. Spam campaigns grew in sophistication alongside the platform, and Bluesky needed a solution that would scale with them.

Aaron Rodericks, Bluesky's Head of Trust & Safety, explains:

"We grew from 3,000 users a day to a million users a day. With this step function in new users came people targeting those users and trying to take advantage of those users: massive waves of fake accounts, troll accounts, spam and scam accounts."

Aaron and Bluesky partnered with Musubi to implement an approach to automation that fit their values as much as their needs. Rather than replacing moderator judgment, Musubi learns directly from it, applying human insights to spam reports at a speed and scale that no team alone could match.

The Challenge: Keeping Up With Spam at Scale

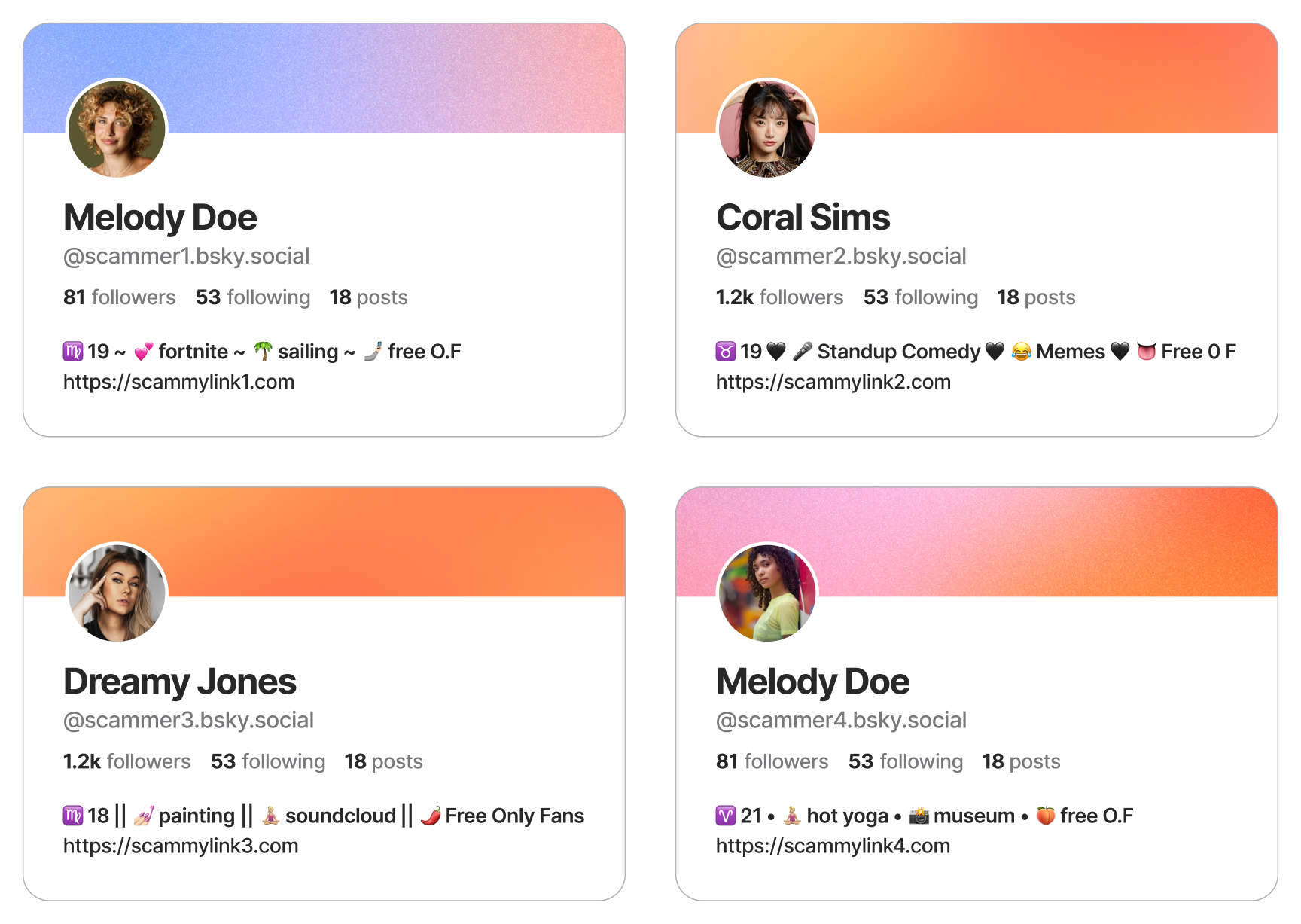

Spam campaigns on social platforms follow a familiar playbook. Bad actors create thousands of accounts in waves, building realistic-looking profiles to slip past initial detection, then mass-reply to trending posts with crypto scams, phishing links, and adult content, mutating just enough to evade simple filters. Each wave generates more reports than a human queue can clear quickly.

For Bluesky, the bottleneck was time. With manual review, a spam account could stay live for over four hours before anyone took action. During that window, a single campaign could reach thousands of users and generate hundreds of reports.

And the threat kept evolving as bad actors learned what the platform's defenses could and couldn't catch.

"With time and with success, we've attracted adversaries that are focused on testing every single boundary we have. We are most definitely seeing constant — if not daily, sometimes weekly — evolution in some of these bad actors to evade all of our detections."

— Aaron Rodericks, Head of Trust & Safety, Bluesky

Adding headcount wasn't the answer. At that volume and velocity, the problem wasn't effort; it was that human moderators reviewing individual tickets in isolation were working without the full picture. What the team needed was a system that could see what they couldn't, act on it immediately, and keep learning as tactics changed.

The Solution: Automation That Learns From Your Best Moderators

What drew Aaron to Musubi wasn't just the promise of faster decisions; it was a fundamentally different idea about how those decisions should be made.

"Everyone was trying to do AI the exact same way — hey, we can look at one piece of content and make an accurate policy assessment for you. Musubi was literally the only example that I had looked at in which you were doing a whole account assessment."

— Aaron Rodericks, Head of Trust & Safety, Bluesky

Content-level analysis tells you what someone posted. Account-level analysis tells you who they are — their behavior over time, their metadata, the patterns that connect them to other accounts the team has already actioned. For a platform where context determines whether something is a policy violation, that's the difference between a tool that flags posts and one that actually understands enforcement.

- When a moderator takes action on a report, Musubi analyzes the account signals associated with that decision, such as behavioral patterns, content, metadata, and account history.

- Musubi then builds an increasingly accurate model of what enforcement looks like on Bluesky specifically.

- Once Musubi’s model has sufficient confidence in a pattern, it begins handling matching reports automatically, in real time.

- As spam campaigns evolve, the model updates continuously alongside moderator decisions, and the updated model is applied automatically to future reports.
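
The loop described in the steps above can be illustrated with a deliberately simplified sketch. It is not Musubi's implementation, which the source does not describe in detail. The `Report` and `PatternLearner` names, the string `signature` standing in for account-level signals, and the two thresholds are all hypothetical choices made for illustration. The sketch only shows the general idea of confidence-gated automation: learn from moderator decisions, automate once a pattern is consistent enough, and fall back to human review otherwise.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Report:
    account_id: str
    # Hypothetical stand-in for account-level signals (behavior, metadata, history).
    signature: str


class PatternLearner:
    """Toy model: tracks how often moderators take down accounts per signature."""

    def __init__(self, min_examples: int = 20, min_confidence: float = 0.95):
        # Both thresholds are illustrative assumptions, not values from the source.
        self.min_examples = min_examples
        self.min_confidence = min_confidence
        # signature -> [takedown_count, total_decisions]
        self.counts = defaultdict(lambda: [0, 0])

    def record_decision(self, report: Report, took_down: bool) -> None:
        """Learn from a human moderator's decision on a report."""
        stats = self.counts[report.signature]
        stats[0] += int(took_down)
        stats[1] += 1

    def route(self, report: Report) -> str:
        """Automate only when the pattern has enough consistent human decisions."""
        takedowns, total = self.counts.get(report.signature, (0, 0))
        if total >= self.min_examples and takedowns / total >= self.min_confidence:
            return "auto_takedown"
        return "human_review"


learner = PatternLearner(min_examples=3, min_confidence=0.9)
spam = Report("acct-1", "new-account+mass-reply+crypto-link")
print(learner.route(spam))  # human_review: no moderator decisions yet

for _ in range(3):
    learner.record_decision(spam, took_down=True)

# A different account matching the same learned pattern is now handled automatically.
print(learner.route(Report("acct-2", spam.signature)))  # auto_takedown
```

Because every new moderator decision updates the counts, a pattern that moderators stop actioning consistently drops back below the confidence threshold and returns to the human queue.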

"Whole account analysis with Musubi gave us the possibility and the opportunity to take more context into account in the analysis. Moderators aren't particularly talented at saying this account is a spam account looking at a sample of one — having one system that's continually training on the type of accounts that we take down, and seeing the patterns between those, that was the real advantage."

— Aaron Rodericks, Head of Trust & Safety, Bluesky

Every automated decision that Musubi makes is grounded in a human one. The system doesn't impose an external definition of what a policy violation looks like; it learns Bluesky's definition, from Bluesky's team, and applies it at scale. And because it learns from consistent patterns across the whole moderation team rather than any single person, it reflects their collective judgment rather than individual variation or off days.

The proactive layer follows from the same logic. Once Musubi understands the profile of a policy-violating account, it can assess new sign-ups and early interactions before any report is filed, stopping bad actors before they reach users, rather than after.

The Results: Scale Rooted in Human Intelligence

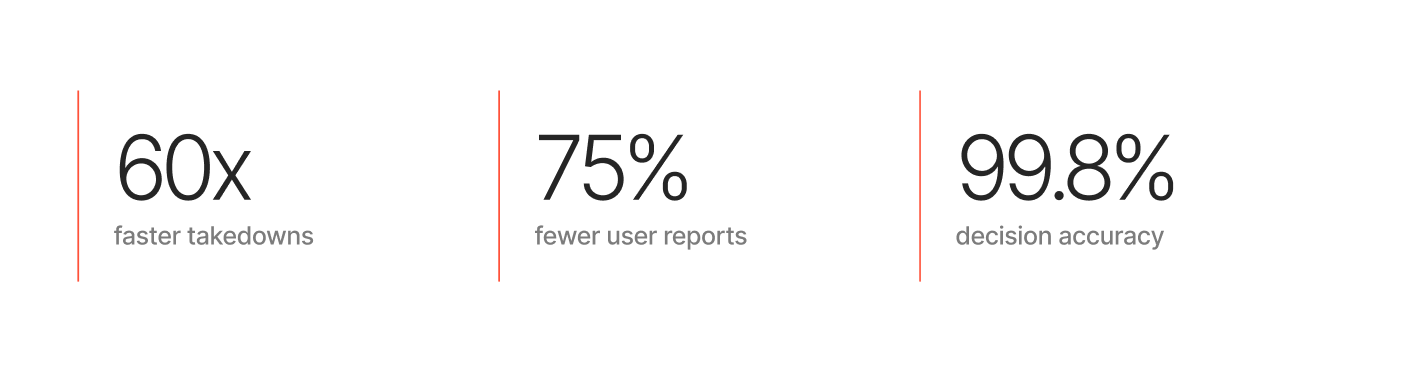

60x faster takedowns.

Before Musubi, spam accounts could stay live for over four hours while reports worked their way through a manual queue. With Musubi handling thousands of high-confidence decisions automatically in about 10 seconds, Bluesky’s median time-to-action dropped to under four minutes. That compression in response time directly limits what any spam campaign can accomplish, because the window to reach users, generate reports, and cause harm is far shorter.

75% fewer user reports per banned account.

Accounts taken down by Musubi averaged 1.6 user reports before action was taken, compared to 7.0 for human-moderated cases. The reduction reflects proactive detection: users weren't filing reports for content they never saw. Misleading content reports fell month over month, even as the user base continued to grow.

99.8% decision accuracy.

Musubi decisions were appealed and overturned at a rate of 0.02%, compared to 0.57% for human moderators — a 96% lower reversal rate. Because Musubi learns from consistent patterns across the full team rather than any individual, it reflects their collective judgment at its best, not at its most variable.

85% cost savings compared to human moderation alone.

Bluesky was able to scale enforcement rapidly without a proportional increase in cost, keeping moderation sustainable as the platform grew.
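
As a rough check, the ratios in this section can be recomputed from the rounded figures quoted in the text. The inputs below are those published numbers. Treating "over four hours" and "under four minutes" as exactly four is an assumption, so the results are approximate and may differ slightly from headlines that were presumably derived from unrounded data. The `percent_reduction` helper is a name introduced here for illustration.

```python
def percent_reduction(before: float, after: float) -> float:
    """Relative drop from `before` to `after`, as a percentage."""
    return (before - after) / before * 100


# Time to action: roughly four hours (240 minutes) down to roughly four minutes.
speedup = (4 * 60) / 4

# User reports per actioned account: 7.0 (human-moderated) vs 1.6 (Musubi).
reports_reduction = percent_reduction(7.0, 1.6)

# Appeal-and-overturn rate: 0.57% (human moderators) vs 0.02% (Musubi).
reversal_reduction = percent_reduction(0.57, 0.02)

print(f"{speedup:.0f}x faster")                        # 60x faster
print(f"{reports_reduction:.0f}% fewer reports")       # 77% fewer reports
print(f"{reversal_reduction:.0f}% lower reversal rate")  # 96% lower reversal rate
```

With these rounded inputs, the report reduction comes out slightly above the 75% headline figure, while the speedup and reversal-rate figures match the text.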

What Musubi Made Possible

Now that Musubi is handling high-confidence spam decisions reliably, Bluesky's moderators aren't working through a queue of repetitive, high-volume cases. They're focused on the work that actually requires them: novel harms, policy edge cases, escalations, and quality assurance that makes the whole system better over time — a closer match to Bluesky's moderation philosophy, and a better use of some of the most experienced trust and safety practitioners in the industry.

Every quality decision a moderator makes feeds back into Musubi's model, which means the system keeps improving as the team does its best work. Moderation capacity grows with the platform, without requiring a proportional increase in headcount, and the defense gets more accurate over time rather than drifting.

"It's really nice to work with Musubi because they keep on evolving their product and their offerings — better and better over time, more transparent and giving you more insight. And having the knowledge that the Musubi folks have experience at scale means that I know that they've operated at the kind of scale that I'm going to deal with."

— Aaron Rodericks, Head of Trust & Safety, Bluesky

With Musubi, Bluesky’s Trust & Safety team has built a strategic defense:

- Bad actors quickly learn that the platform's defenses are swift, accurate, and constantly adapting, making them a far less attractive target.

- Bluesky’s moderators are able to focus on the complex edge cases and emerging harms that truly require their skills, instead of making repetitive decisions on spam.

- The platform is able to keep up with rapid growth and scale, without worrying about moderation cost or hiring massive moderation teams.

Musubi acts as a force multiplier, scaling Bluesky’s expertise and ensuring the platform remains a safe, trusted, and open space for conversation.